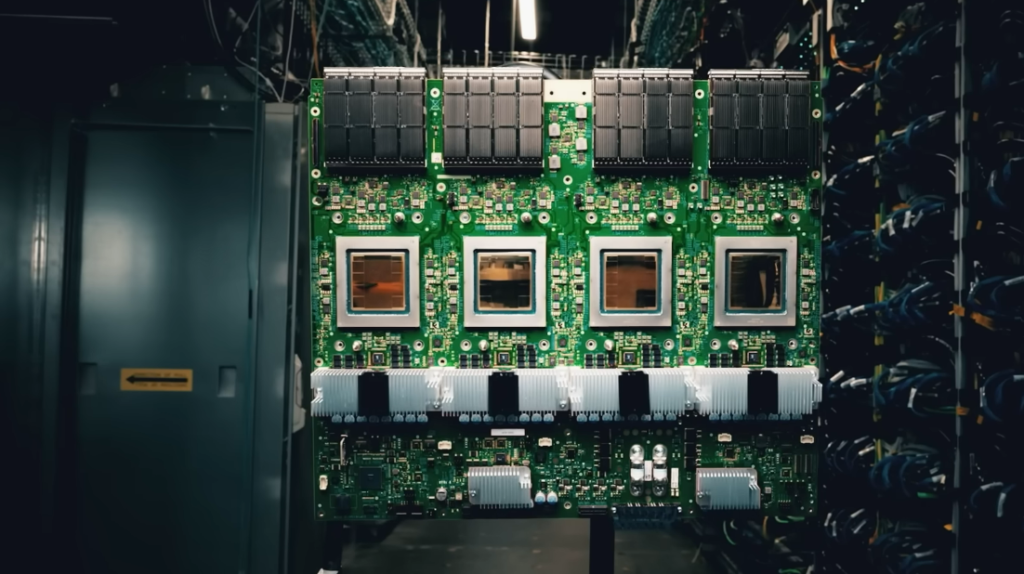

Every Nvidia H100 has a flaw that isn’t mentioned in the marketing materials. The chip itself is remarkable: an engineering marvel that performs calculations at speeds that would have seemed unattainable ten years ago. Yet memory, specifically the High Bandwidth Memory (HBM) stacked around it, accounts for about half of its manufacturing cost, and that memory is barely keeping up. Because the processor is so fast, it spends a significant share of its time waiting for data that the memory system cannot deliver quickly enough. This is known as the memory wall, and it is arguably the most significant bottleneck in contemporary computing.
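One way to make “waiting for data” concrete is the roofline model, a standard back-of-the-envelope bound (a swapped-in illustration, not a method the article itself uses): a kernel’s attainable throughput is the smaller of peak compute and its arithmetic intensity (FLOPs per byte moved) times memory bandwidth. The sketch below assumes illustrative round numbers for an H100-class accelerator, 1,000 TFLOP/s of dense compute and 3.35 TB/s of HBM bandwidth; treat both as assumptions rather than spec-sheet values.

```python
# Minimal roofline sketch: throughput is capped either by peak compute or by
# how fast memory can feed the chip.
# ASSUMPTION: PEAK_FLOPS and MEM_BW are illustrative round numbers, not official specs.
PEAK_FLOPS = 1000e12  # assumed peak dense compute, FLOP/s
MEM_BW = 3.35e12      # assumed HBM bandwidth, bytes/s


def attainable_flops(intensity: float, peak: float = PEAK_FLOPS, bw: float = MEM_BW) -> float:
    """Roofline bound for a kernel doing `intensity` FLOPs per byte moved."""
    return min(peak, intensity * bw)


def ridge_point(peak: float = PEAK_FLOPS, bw: float = MEM_BW) -> float:
    """Arithmetic intensity (FLOP/byte) above which compute, not memory, is the limit."""
    return peak / bw


# Matrix-vector multiply with 16-bit weights: each 2-byte weight feeds one
# multiply and one add, i.e. about 1 FLOP per byte of weights read.
gemv_intensity = 2 / 2
utilization = attainable_flops(gemv_intensity) / PEAK_FLOPS

print(f"ridge point: {ridge_point():.0f} FLOP/byte")
print(f"GEMV compute utilization: {utilization:.2%}")
```

Under these assumptions a matrix-vector product, the core of batch-size-1 LLM inference, sits roughly 300x below the ridge point, so the arithmetic units sit idle for well over 99% of their potential while memory streams the weights in.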

The term itself is not new. Researchers first used it in 1994 to describe a trend they could already see coming: processor speeds were rising faster than memory systems could supply those processors with data. Thirty years later, the observation still holds, the gap has widened considerably, and the stakes have soared, because AI has turned memory bandwidth from an engineering annoyance into an existential constraint on one of the world’s most capital-intensive industries.

DRAM, the working memory computers use for fast access to data, once enjoyed a golden age. In its heyday, DRAM density doubled every 18 months, roughly matching Moore’s Law for logic chips, and over two decades the price per bit fell by three orders of magnitude. Korean companies eventually overtook Japanese manufacturers. The scaling was relentless and seemed as though it would go on forever. By the late 2010s, however, the harsh physics of shrinking capacitors and sense amplifiers at dimensions near 10 nanometers had slowed DRAM density growth to roughly 2x per decade. A chasm opened between what memory could provide and what processors could compute.
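To see how wide that chasm is, it helps to compound the two rates given above over the same ten-year window. This is a minimal sketch; the only inputs are the 18-month doubling period and the roughly 2x-per-decade figure from the text.

```python
def growth_over(years: float, doubling_period_years: float) -> float:
    """Multiplicative density growth after `years` if density doubles every `doubling_period_years`."""
    return 2 ** (years / doubling_period_years)


golden_age = growth_over(10, 1.5)  # doubling every 18 months
recent = growth_over(10, 10)       # roughly 2x per decade

print(f"golden age: ~{golden_age:.0f}x per decade")
print(f"recent era: ~{recent:.0f}x per decade")
```

The golden-age rate compounds to roughly 100x per decade, about fifty times the recent pace, while logic kept scaling far faster than 2x per decade.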

| Category | Details |
|---|---|
| Concept | The memory wall: the growing gap between processor speed and memory bandwidth and access rates |
| Term Origin | First used in 1994 to describe processor performance outpacing memory interconnect bandwidth |
| DRAM Scaling Problem | DRAM density has improved only ~2x in the last decade, down from doubling every 18 months at its peak |
| HBM Cost | High Bandwidth Memory (HBM) costs 3x or more per GB compared with standard DDR5 |
| H100 Memory Cost Share | About 50% or more of H100 manufacturing cost is attributed to HBM; rises to about 60% or more with Blackwell |
| AI Model Size Growth | AI model parameter counts began dramatically outpacing processor performance around 2019 |
| Current HBM Specs | HBM3E: 256-bit bus per die, 8 or more stacked dies, 1024-bit total interface width per stack |
| Energy Inefficiency | DDR5 DIMMs spend 99%+ of read/write energy in the host controller and interface |
| Bank Potential vs. Reality | Theoretical internal bank throughput of a single DRAM chip: ~4 TB/s; delivered HBM bandwidth: ~256 GB/s per die |
| Emerging Solutions | Compute-in-Memory (CIM), CXL memory expansion, 3D DRAM, FeRAM, MRAM, vertical channel transistors |
| Market Concentration | The top 3 DRAM suppliers (Samsung, SK Hynix, Micron) control 95%+ of the global market |
| Research Institution | Argonne National Laboratory’s ACCESS (led by Venkat Srinivasan): exploring alternative memory architectures |
| Reference Links | SemiAnalysis, “The Memory Wall: Past, Present, and Future of DRAM”; EE World Online, “HPC Memory Wall Explained” |

The AI boom turned that gap into a crisis. When large language models began scaling in earnest around 2019, their memory requirements grew faster than those of any previous computing application. Model weights alone are approaching multi-terabyte scale, and training these models means moving enormous volumes of data between memory and compute at rates that strain every component in the system. The industry’s best current answer is HBM, specialized memory stacked vertically and placed alongside AI accelerators. But it is expensive, yields poorly at scale, and grows more physically complex with each generation. Samsung has struggled with HBM3E yields, SK Hynix is in a stronger position, and Micron is making progress. The margins are large, the competition is fierce, and the underlying problem remains unsolved.
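To put “multi-terabyte” in perspective, weight memory is just parameter count times bytes per parameter. The sketch below assumes a hypothetical 1-trillion-parameter model stored at 2 bytes per parameter (16-bit precision) and 80 GB of HBM per GPU, the capacity of the common H100 SXM configuration; both are illustrative assumptions, not figures from the article.

```python
import math

# ASSUMPTION: 80 GB of HBM per GPU (common H100 SXM capacity), decimal units.
GPU_HBM_BYTES = 80e9


def weight_bytes(num_params: float, bytes_per_param: float) -> float:
    """Bytes needed to store the model weights alone."""
    return num_params * bytes_per_param


def gpus_to_hold(total_bytes: float, per_gpu_bytes: float = GPU_HBM_BYTES) -> int:
    """Minimum number of GPUs whose combined HBM can hold `total_bytes`."""
    return math.ceil(total_bytes / per_gpu_bytes)


# Hypothetical 1-trillion-parameter model in 16-bit precision.
weights = weight_bytes(1e12, 2)
print(f"{weights / 1e12:.1f} TB of weights need at least {gpus_to_hold(weights)} GPUs just to fit")
```

This counts weights only. Training adds gradients, optimizer state, and activations, which multiply the footprint several times over, so this is a floor on HBM capacity rather than a realistic requirement.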

Look at the numbers and the situation seems absurd. Within its internal banks, a single DRAM chip can theoretically move up to 4 terabytes per second. The HBM3E package built around those chips delivers roughly 256 gigabytes per second per die to the outside world, about one-sixteenth of what the underlying hardware could in principle provide. The rest is lost in the interface, in architectural limitations that DRAM has carried since the 1970s, and in a half-duplex communication model that can either read or write at any given moment, but not both. By one estimate, more than 99% of the energy of a DDR5 read or write goes to the interface between the processor and memory; almost none is spent moving data within the memory cells themselves.
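The per-die figure follows from simple interface arithmetic: peak bandwidth equals bus width times per-pin data rate, divided by eight bits per byte. This minimal sketch takes the 256-bit per-die bus from the summary table and assumes a per-pin rate of 8 Gb/s, a hypothetical round number chosen for illustration.

```python
def interface_bandwidth_gbs(bus_width_bits: int, pin_rate_gbps: float) -> float:
    """Peak interface bandwidth in GB/s: bus width x per-pin rate (Gb/s) / 8 bits per byte."""
    return bus_width_bits * pin_rate_gbps / 8


# ASSUMPTION: 256-bit bus per die running at a hypothetical 8 Gb/s per pin.
per_die = interface_bandwidth_gbs(256, 8.0)
internal = 4000.0  # ~4 TB/s internal bank throughput, expressed in GB/s

print(f"per-die interface: {per_die:.0f} GB/s")
print(f"internal banks / interface: {internal / per_die:.1f}x")
```

The ratio of about 15.6 is where the “one-sixteenth” comes from: the banks can produce data far faster than the narrow interface can carry it off the die.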

The solutions under development range from modest to truly ambitious. In the near term, the industry is pursuing vertical channel transistors and 4F² cell layouts, architectural changes that can modestly increase density without shrinking the basic feature size. HBM4, expected in the coming years, will widen the data bus to 2,048 bits per stack and move base-die fabrication onto FinFET logic processes at foundries such as TSMC. That opens the door to custom logic in the base die that may relieve some of the interface inefficiencies.

Compute-in-memory techniques offer a more fundamental fix: they move processing logic directly onto the memory chip instead of routing everything through an external controller. However, they require manufacturing changes that the DRAM industry has resisted for decades. The CXL standard, an emerging memory interconnect built on the PCIe physical layer, offers a route toward expanding and sharing memory across processors, which may relieve some capacity pressure without requiring new memory chemistries.
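Two of the near-term changes reduce to simple ratios. Mainstream DRAM today uses a 6F² cell (F being the minimum feature size), so a 4F² layout packs up to 1.5x as many cells into the same area at the same F; and doubling the bus from 1,024 bits (HBM3E) to 2,048 bits (HBM4) doubles peak per-stack bandwidth at a given pin rate. The sketch assumes a hypothetical 15 nm feature size and 8 Gb/s pin rate purely for illustration; neither value affects the ratios.

```python
# Sketch 1: idealized density gain from a smaller cell layout.
# ASSUMPTION: F_NM is a hypothetical feature size; the ratio does not depend on it.
F_NM = 15.0


def cells_per_mm2(feature_nm: float, cell_factor: float) -> float:
    """Cells per mm^2 if each cell occupies cell_factor * F^2 (F in nm)."""
    cell_area_nm2 = cell_factor * feature_nm ** 2
    return 1e12 / cell_area_nm2  # 1 mm^2 = 1e12 nm^2


gain = cells_per_mm2(F_NM, 4) / cells_per_mm2(F_NM, 6)

# Sketch 2: peak per-stack bandwidth from bus width at an equal pin rate.
# ASSUMPTION: hypothetical per-pin data rate, held the same for both widths.
PIN_RATE_GBPS = 8.0


def stack_bandwidth_tbs(bus_width_bits: int, pin_rate_gbps: float = PIN_RATE_GBPS) -> float:
    """Peak stack bandwidth in TB/s (decimal units)."""
    return bus_width_bits * pin_rate_gbps / 8 / 1000


hbm3e = stack_bandwidth_tbs(1024)
hbm4 = stack_bandwidth_tbs(2048)

print(f"4F^2 vs 6F^2 at fixed F: {gain:.2f}x cells per area")
print(f"1024-bit stack: {hbm3e:.3f} TB/s, 2048-bit stack: {hbm4:.3f} TB/s")
```

Both numbers are idealized upper bounds: real density gains shrink once peripheral circuitry and array overheads are counted, and real HBM4 bandwidth depends on the pin rates vendors actually ship.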

Longer-term substitutes such as magnetic RAM (MRAM) and ferroelectric RAM (FeRAM) have shown promise for years but have yet to reach high-volume production as DRAM replacements. The obstacles are more often yield, cost, and the difficulty of displacing a commodity with established supply chains than the physics itself. According to Venkat Srinivasan of Argonne National Laboratory, reliability is the first, second, and third requirement in HPC applications; before trusting a product, utilities want ten years of data. New memory types, whatever their potential, start at zero on that count.

The memory wall is a long-standing problem that will take time to solve. The AI industry now spends hundreds of billions of dollars a year on systems whose performance this bottleneck directly limits, so the incentive to solve it has never been greater. Whether that pressure pushes compute-in-memory from research into production, accelerates a genuine breakthrough in DRAM scaling, or simply drives further improvements in HBM packaging remains genuinely unclear. What is clear is that the gap is growing, the wall is real, and the processors are waiting.